Cross-Disciplinary Exploration

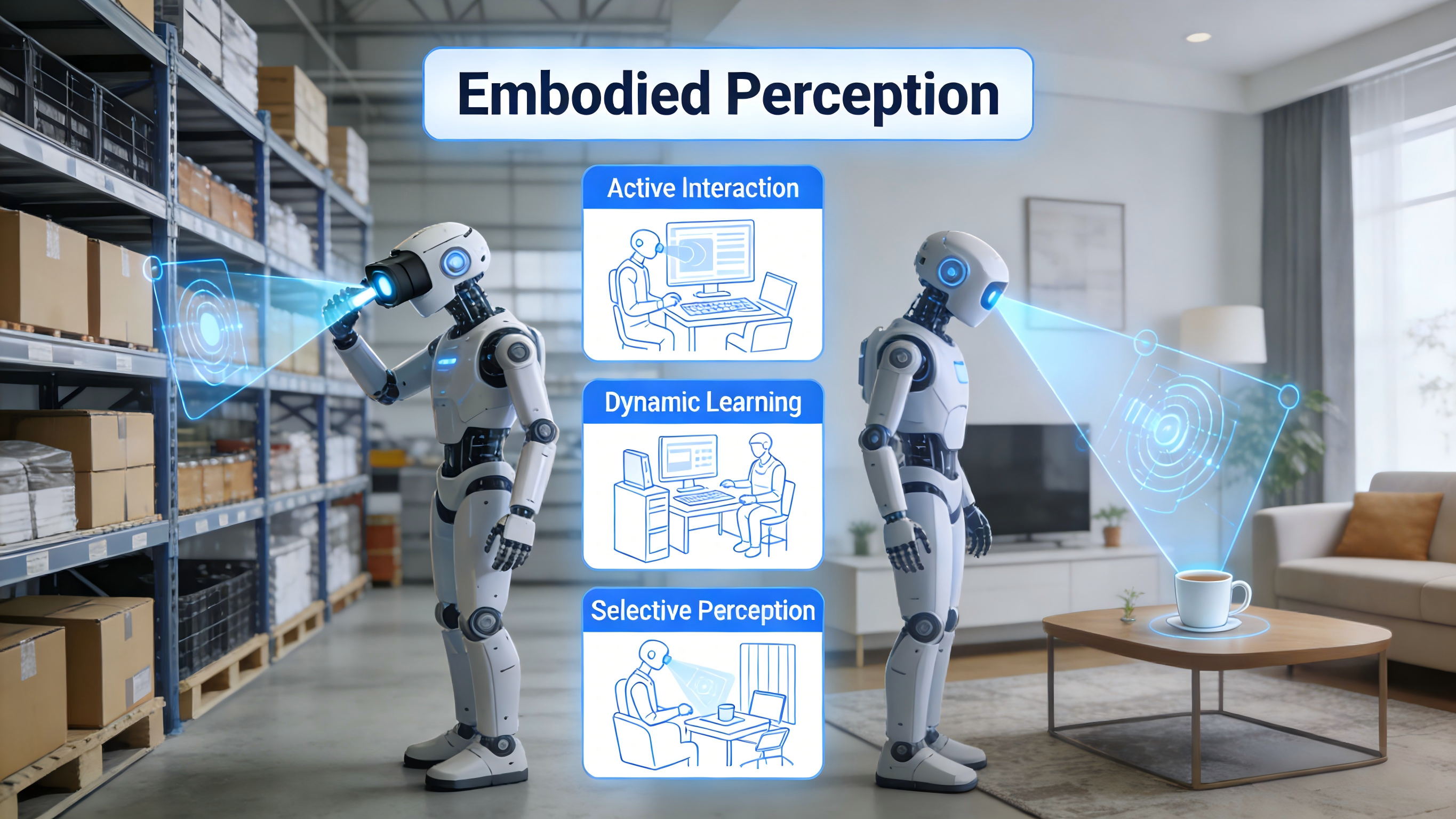

Building on the core technologies of embodied perception and navigation, we extend our work into related research areas such as multimodal fusion and system deployment. Our aim is a complete research chain from basic theory through core technology to practical application, moving embodied AI from the laboratory into real-world scenarios.

Integration of Multimodal Large Models and Embodied AI

We explore mechanisms for deeply fusing multimodal information (e.g., language, vision, and spatial cues) to strengthen agents' understanding and decision-making. Research directions include MLLM-based instruction parsing for vision-language navigation, cross-modal map representation learning, and natural language-driven fine-grained perception.

Leveraging the knowledge transfer capabilities of multimodal large models, we strive to reduce system training costs and improve zero-shot generalization.

World Model Pre-training and Safe Planning

We focus on agents' ability to model and predict their environment, researching pre-training methods for general world models (e.g., the PreLAR framework) that capture environmental dynamics and physical rules. Combined with reinforcement learning, we implement safe and feasible trajectory planning that maintains navigation efficiency while satisfying multiple constraints such as obstacle avoidance, speed limits, and comfort.
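To make the three constraints concrete, the following is a minimal sketch of how a candidate 2D trajectory might be checked against them. It is an illustration only, not the planner or the PreLAR framework itself: the function name `check_trajectory`, the circular obstacle model, the thresholds, and the use of acceleration magnitude as a stand-in for comfort are all assumptions made for this example.

```python
import math


def check_trajectory(points, dt, obstacles, min_clearance, max_speed, max_accel):
    """Check a 2D waypoint trajectory against three illustrative constraints.

    points:    list of (x, y) waypoints sampled every `dt` seconds
    obstacles: list of (x, y, radius) circles (hypothetical obstacle model)
    Returns a dict mapping constraint name -> True if satisfied.
    """
    # Finite-difference velocities between consecutive waypoints.
    vels = [((x1 - x0) / dt, (y1 - y0) / dt)
            for (x0, y0), (x1, y1) in zip(points, points[1:])]
    speeds = [math.hypot(vx, vy) for vx, vy in vels]

    # Acceleration magnitude, used here as an assumed proxy for comfort.
    accels = [math.hypot((vx1 - vx0) / dt, (vy1 - vy0) / dt)
              for (vx0, vy0), (vx1, vy1) in zip(vels, vels[1:])]

    # Smallest distance from any waypoint to any obstacle surface.
    clearance = min(
        (math.hypot(px - ox, py - oy) - r
         for px, py in points
         for ox, oy, r in obstacles),
        default=math.inf,
    )

    return {
        "obstacle_avoidance": clearance >= min_clearance,
        "speed_limit": all(s <= max_speed for s in speeds),
        "comfort": all(a <= max_accel for a in accels),
    }


if __name__ == "__main__":
    straight = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (3.0, 0.0)]
    report = check_trajectory(
        straight, dt=1.0, obstacles=[(1.0, 2.0, 0.5)],
        min_clearance=0.5, max_speed=1.5, max_accel=1.0,
    )
    print(report)
```

In a reinforcement learning setting, a check of this kind could be used to reject infeasible rollouts or to shape a penalty term; which of these a given system uses is a design choice not specified here.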

This research provides key technical support for safety-critical scenarios like autonomous driving and robotic navigation in complex environments.