Research

Our research group is dedicated to advancing embodied AI: building intelligent agents capable of autonomous perception, decision-making, and interaction within open, dynamic, and complex physical environments. We aim to move beyond the limitations of traditional static perception by studying the ‘perception–decision–action–feedback’ closed loop. Our goal is to develop general, proactive, and efficient embodied AI systems that adapt flexibly to a complex, ever-changing real world, combining active environmental perception with precise navigation.

Our research efforts primarily revolve around the following three closely interconnected directions:

-

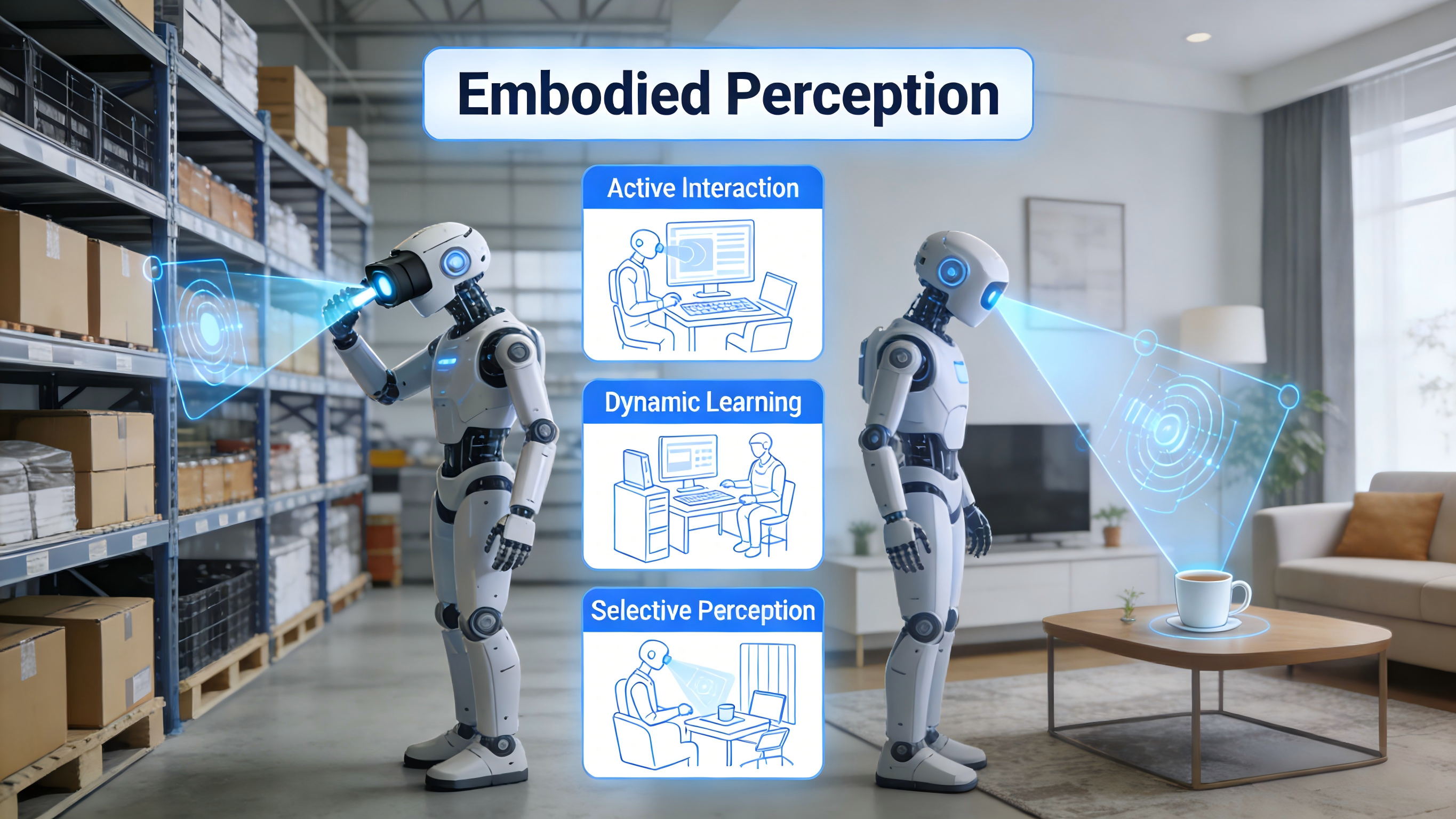

Embodied Perception Exploring how agents can acquire higher-quality perceptual information through active interaction with the environment, continuously learn new knowledge, and achieve efficient, selective perception based on task requirements. Core research directions include active visual perception, continual learning for perception, and task-driven visual perception.

-

Visual Navigation Investigating how agents understand natural language instructions, perform spatial reasoning, predict dynamic object motion, understand environmental affordances, and ultimately plan safe and efficient movement paths in complex scenes. Simultaneously, we focus on how agents can adapt to unknown environments through evolutionary learning.

-

Cross-Disciplinary Exploration Integrating cutting-edge technologies such as world models, vision-language navigation, and vision-language-action models to promote the development of next-generation embodied AI systems that are safer, more reliable, and possess zero-shot generalization capabilities.